Author: Quan To

Earlier in the year, Nobl9 launched its Splunk Core integration, enabling SLOs on your data in Splunk Enterprise. It let you pass in search queries to retrieve metrics such as status or return codes, then use those metrics to build SLOs and monitor your system through your Splunk logs.

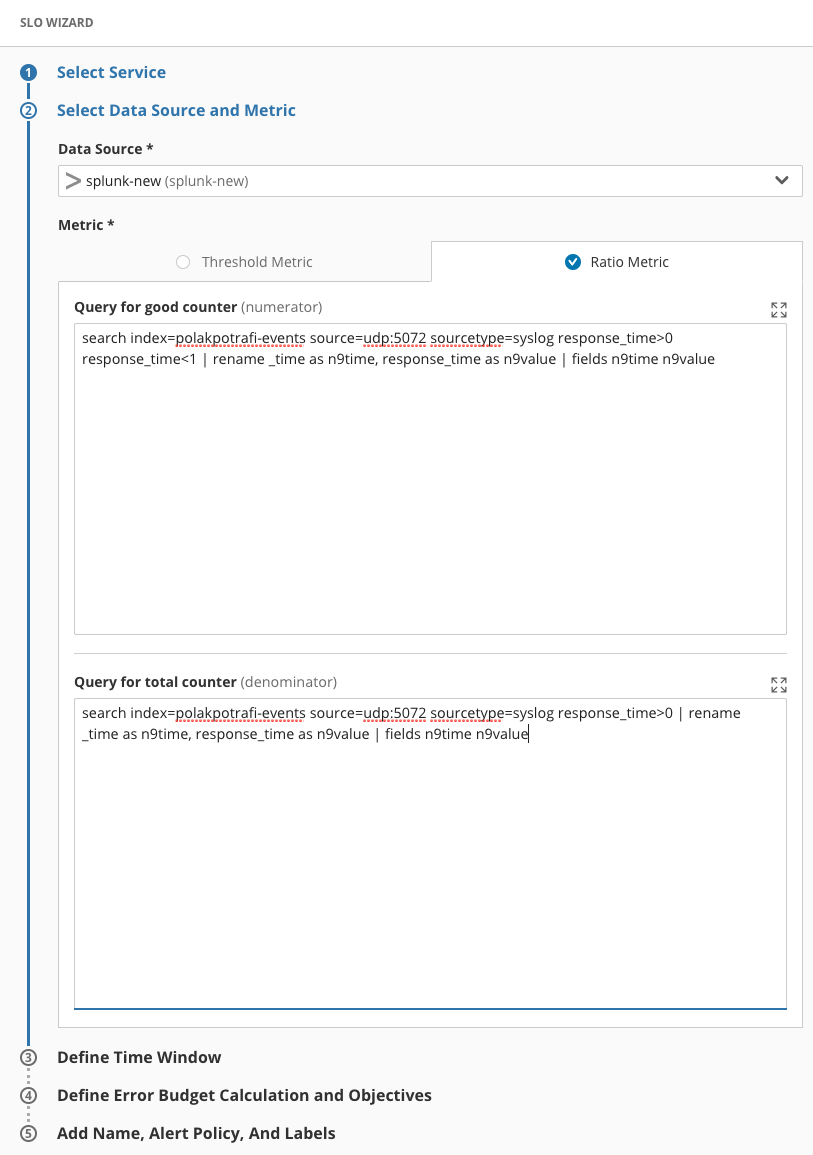

Today, I’m pleased to announce that we have extended that capability to support the full Search Processing Language (SPL) for Splunk. With this enhancement, you can pass SPL syntax through the Splunk integration to search, filter, and run operations on your logs, so Nobl9 pulls exactly the data you need to create SLOs.

For example, instead of matching all return codes > 400, you can now count occurrences of one specific return code. You can build ratio metrics of successful calls against total API calls in a specific time window, or retrieve the average response time, provided that data is in your Splunk logs.
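To make the ratio-metric idea concrete, here is a minimal plain-Python sketch of what a "successful calls vs. total calls per time window" ratio computes. It is not how Nobl9 or Splunk evaluate the metric internally. The records are made-up sample data, the function name `success_ratio_by_window` is hypothetical, and treating any status below 400 as "successful" is an assumption for illustration.

```python
from collections import defaultdict

# Hypothetical sample log records: (unix_timestamp_seconds, http_status_code).
# In practice this data would live in Splunk; these values are invented.
RECORDS = [
    (0, 200), (10, 200), (20, 500), (30, 201),
    (60, 200), (70, 404), (80, 200), (90, 200), (100, 200),
]


def success_ratio_by_window(records, window_seconds=60):
    """Return {window_start: successful_calls / total_calls}.

    Assumption: a call counts as successful if its status is below 400.
    """
    good = defaultdict(int)
    total = defaultdict(int)
    for ts, status in records:
        window = ts - ts % window_seconds  # align timestamp to its window start
        total[window] += 1
        if status < 400:
            good[window] += 1
    # Every window in `total` has at least one call, so no division by zero.
    return {w: good[w] / total[w] for w in sorted(total)}


if __name__ == "__main__":
    print(success_ratio_by_window(RECORDS))
```

With the sample data, the first minute has 3 good calls out of 4 and the second has 4 out of 5, so the ratios are 0.75 and 0.8.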

Within your Splunk Enterprise console, you can build your queries and then copy and paste them into Nobl9 when defining your SLO. Note that Nobl9 requires your query to produce the extracted fields n9time and n9value, as shown in the example below. These two fields tell Nobl9 which field is the timestamp and which is the metric you want to build your SLO against. This follows the same model we use for other data sources, such as Google BigQuery.
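As a rough sketch of the n9time/n9value requirement, the Python helper below assembles an SPL query whose output contains only those two fields. The helper name `build_nobl9_spl` and its parameters are hypothetical. The SPL pipeline (`bin`, `stats ... by _time`, `rename`, `fields`) is one plausible way to produce the fields using standard SPL commands, not an official Nobl9 query template; check the Nobl9 Splunk documentation for the exact form it expects.

```python
def build_nobl9_spl(base_search: str, value_expr: str, span: str = "1m") -> str:
    """Wrap a Splunk search so its results expose n9time and n9value.

    Hypothetical helper for illustration only.
    - base_search: the search/filter part, e.g. "index=web status=200"
    - value_expr: a stats aggregation producing the metric, e.g. "count"
    - span: bucket size passed to SPL's bin command
    """
    return (
        f"{base_search} "
        f"| bin _time span={span} "                   # group events into time buckets
        f"| stats {value_expr} as n9value by _time "  # the metric Nobl9 reads as the value
        "| rename _time as n9time "                   # the timestamp Nobl9 reads as the time
        "| fields n9time n9value"                     # keep only the two required fields
    )


if __name__ == "__main__":
    print(build_nobl9_spl("index=web status=200", "count"))
```

Keeping the metric logic in `value_expr` (for example `count` for a specific return code, or `avg(response_time)` if such a field exists in your logs) leaves the two required field names fixed while the search and aggregation vary.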

As with all Nobl9 data sources, you can also set up the data source using YAML and sloctl from the command line.

We’re continually extending our existing data sources and adding new ones, so stay tuned for more announcements soon.

Splunk Enterprise with SPL support is available to all existing customers. For more details, and for other features released in version 1.14, check out our release notes.

If you haven’t tried Nobl9 yet and want to take it for a test drive, visit us at nobl9.com/signup.

Image Credit: Ross Sneddon on Unsplash.com
