Author: Erza Zylfijaj


Steve McGhee, Reliability Advocate at Google, recently spoke at SLOconf (the first-ever SLO conference for SREs) about the math used when developing SLOs. Steve began his career at Google, where he spent about 10 years as an SRE working on things like mobile search, Android, Google Fiber, YouTube, and Compute Engine. He then left Google and became a cloud customer, learning a lot about the differences between operations inside and outside Google. He later returned to Google as a Solutions Architect, where he helped design adoption patterns for Anthos and helped customers with DevOps and necessary systems like incident response, risk management, capacity planning, and even cluster upgrades. That work led to his current position in reliability advocacy. He also now helps internal Google platform developers better understand what customers are really looking for in their cloud provider.

(Below is a condensed summary of Steve’s SLOconf talk)

You probably already know what an SLO is, but if not, here’s a quick video to get you started. SLOs can be daunting at first. In fact, when I was a cloud customer, the one thing I wasn’t able to accomplish was introducing SLOs. We struggled with the concept and with the implications of our SLO choices for a large, poorly understood stack of connected services managed by disparate teams. What if we chose the wrong SLO? Would the other teams be unable to meet our expectations? Were they going to get mad? Since those early days of uncertainty, I’ve helped a lot of teams through this same struggle and found a few guidelines that make the process go much more smoothly.

First off, you want to set the expectations for customer experience. Your users deserve the level of service they expect, and you deserve to know if you’re meeting that expectation. Is it available enough? Is it fast enough? Is it complete enough? Just start with that. Don’t expect it to be perfect. Of course, it’s a bit more complicated than that.


Bad Math

What if you don’t own your entire stack? How do you deal with that? How do we think about all this stuff? Well here’s one way:

This is what I like to call “naive math.” Do we really expect layers and layers to get stronger and stronger as we go down? Do we have to add a nine at each layer? Is any of this even possible? Clearly not – this can’t be the way that things work. So what’s going on? I call these the pyramids or triangles. The classic IT strategy is to make a huge cold room, fill it with expensive computers, give it a lot of redundant power and network supplies, never let it get too warm, and never turn it off. Put costly hardware in there that will never fall apart and very specific software that has been well-tested and will never make any mistakes, lock the door, and never touch it again.

So what’s wrong with this? It works for the airlines. Why shouldn’t everyone else do this? It turns out that things like scalability and flexibility are missing, and security becomes hard because without flexibility you can’t adapt to new threats. Adding machines to this perfect room is really tricky and causes expensive outages. And by adding features to this perfectly tested software, you introduce more risk, so you have to test it again, which slows things down and causes problems. We call this approach “component level reliability.” The expectation is that everything is up as much as possible, and you’re aiming for total availability. Generally speaking, you also tend to scale “up” here; that is, you increase the machine size or speed but leave the topology unchanged.

The Cloud

So now we have this other model – the cloud. In this case, what we’re doing is using what we like to call SLO-based reliability at many layers. You can think of this as probabilistic reliability or “aggregate availability.” If you can ensure a consistent level of imperfect reliability, you get a lot of flexibility and scalability, giving you more security and overall more reliability. So if you’re aiming for something like 99.9%, you’re not aiming for 100%.
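To make "aiming for 99.9%, not 100%" concrete, here is a minimal sketch that turns an availability target into the amount of unavailability it tolerates. It assumes a time-based view of availability over a 30-day window. The talk does not specify either choice, and the function name `allowed_downtime_minutes` is purely illustrative.

```python
def allowed_downtime_minutes(slo: float, window_days: int = 30) -> float:
    """Minutes of downtime a given availability target tolerates.

    Assumes availability is measured as uptime over a fixed window
    (an illustrative assumption, not something stated in the talk).
    """
    total_minutes = window_days * 24 * 60
    return (1 - slo) * total_minutes


for slo in (0.99, 0.999, 0.9999):
    print(f"{slo:.2%} target -> {allowed_downtime_minutes(slo):.1f} minutes per 30 days")
```

Under these assumptions a 99.9% target leaves roughly 43 minutes per 30 days in which the service may be down without missing its objective, and each additional nine shrinks that allowance by a factor of ten.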

System architectures have gotten more complicated, as well. We’ve seen the rise of service-oriented architecture and microservices. We want to make sure we’re taking advantage of their virtues and not just shuffling these new things onto the old systems. At this point, we have a distributed system. This means things are getting complicated. Maybe you’ve heard this quote from Dr. Leslie Lamport, “a distributed system is one in which the failure of a computer you don’t even know existed can render your own computer unusable.” So now we have these different capabilities like horizontal and vertical scaling, sharding and partitioning of data, and corresponding replication and load balancing. All of this makes it much harder to even reason about our system. So what do we do? Math!

Let’s Do Some Math

Let’s talk about probability. Pretend you have some simple dice with multiple sides. Let’s say that rolling a one is “an outage,” so if we roll a six-sided die, the probability of not having an outage is five divided by six, which equals 0.833. This is essentially our availability SLO: the expected ratio of rolls that we consider good or “happy.” A happy customer is one who doesn’t get a one, for example. And this is what you would measure over time, across many rolls. Now let’s imagine we have four services that are all required to be up, so we roll four dice every time. The probability changes: a “one” on any of those four is an outage. In this case, we get an availability of 0.482, which is not as good. And finally, if we use dice with different numbers of sides (in this case, two 6-sided, one 10-sided, and one 20-sided), you have a measly 0.59 SLO.

The moral here is that the more dice you add to the throw, the worse you do: the combined result can never beat the worst single die in the throw. It all comes down to whether your architecture allows you to use “Intersection” or “Union” math. If you can have independent services or “soft dependencies,” everything gets much better.

A colleague was going over my talk with me, and she asked me when you would define an SLO for a system versus its components. And I thought, “exactly.” The whole point of this is that I want you to design the SLO for a system at the front door. Try to focus on SLOs for your customer and don’t worry about the implications for your dependencies; tackle that separately. You don’t want to break up the system based on Conway’s law. You don’t want to break it up based on where the manager is or which team owns which part. Try to think about the system boundaries in terms of scalability or where the data is replicated, etc. Focus on building more-reliable things on less-reliable things. In the end, you want to build a platform that lets you focus on customer happiness, and I promise all else will follow.
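The dice arithmetic from this section can be checked with a short script. This is a sketch under stated assumptions: every die (service) fails independently, "Intersection" is read as "every hard dependency must be up," and "Union" is read as "at least one of several redundant options must be up." The talk summary does not define the two terms, and the helper names `die`, `all_up`, and `any_up` are illustrative.

```python
from math import prod


def die(sides: int) -> float:
    """Availability of one die when rolling a one counts as an outage."""
    return (sides - 1) / sides


def all_up(availabilities: list[float]) -> float:
    """'Intersection' math: every hard dependency must be up.

    Assumes failures are independent, so the probabilities multiply.
    """
    return prod(availabilities)


def any_up(availabilities: list[float]) -> float:
    """'Union' math: at least one redundant option must be up.

    Assumes failures are independent; this reading of 'Union' is an
    interpretation, not something the talk summary spells out.
    """
    return 1 - prod(1 - a for a in availabilities)


one_die = die(6)
four_dice = all_up([die(6)] * 4)
mixed_dice = all_up([die(6), die(6), die(10), die(20)])
four_redundant = any_up([die(6)] * 4)

print(f"one 6-sided die:           {one_die:.3f}")
print(f"four 6-sided dice, all up: {four_dice:.3f}")
print(f"mixed dice, all up:        {mixed_dice:.3f}")
print(f"four 6-sided dice, any up: {four_redundant:.4f}")
```

With hard dependencies, the combined figure always sits at or below the weakest single die. With the redundant reading, the same four unreliable dice combine into something far better than any one of them, which is the sense in which more-reliable things can be built on less-reliable things.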

Watch Steve’s full talk below:

Contact Nobl9 today for more help with the tough math.

Image credit: Photo by Jeswin Thomas on Unsplash

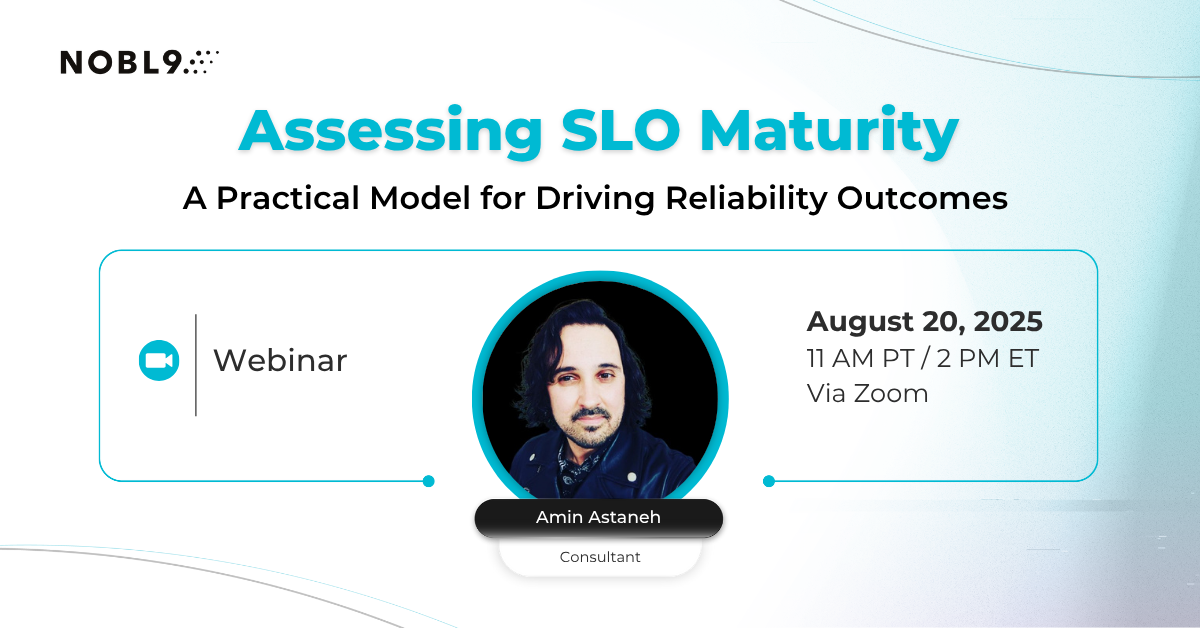

.png?width=1200&height=628&name=Building%20Reliable%20E-commerce%20Experiences%20(34).png)

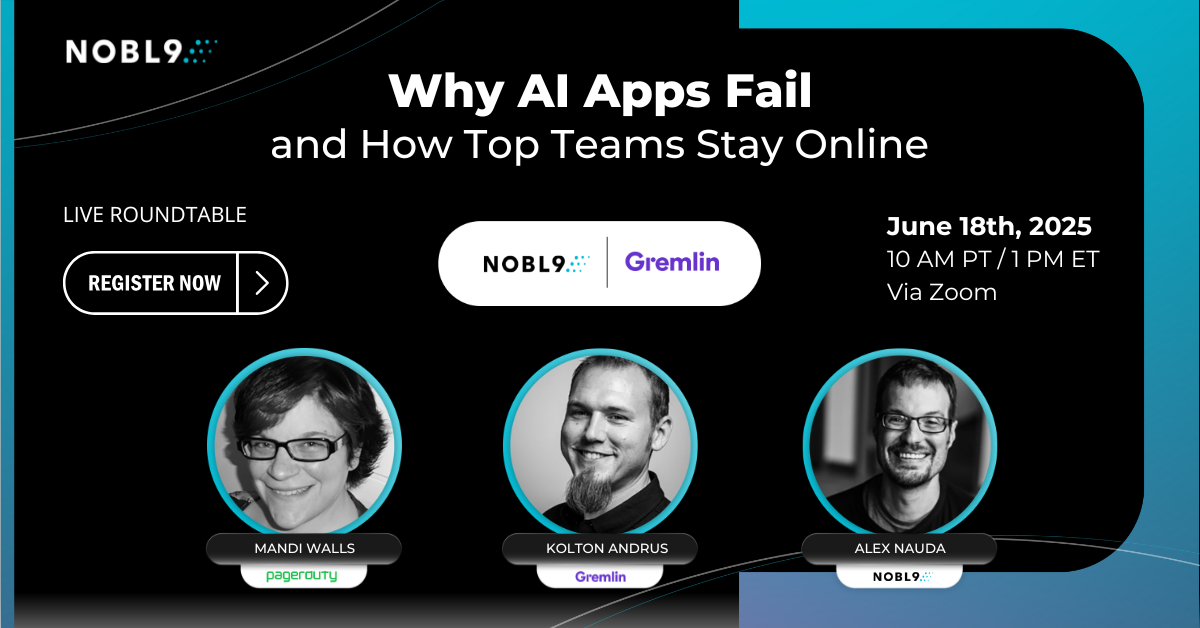

.png?width=1200&height=628&name=Building%20Reliable%20E-commerce%20Experiences%20(36).png)

Do you want to add something? Leave a comment